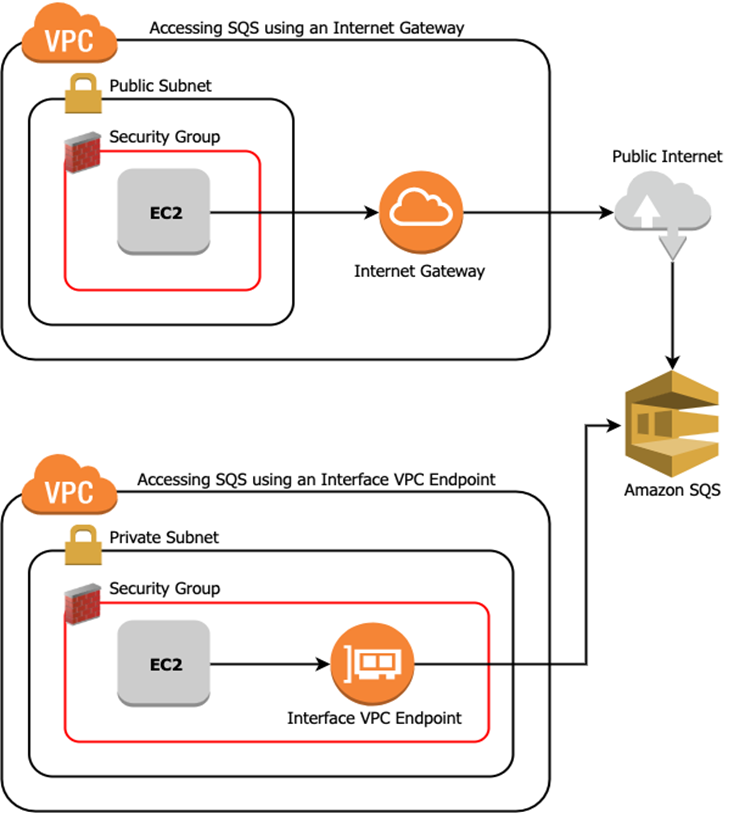

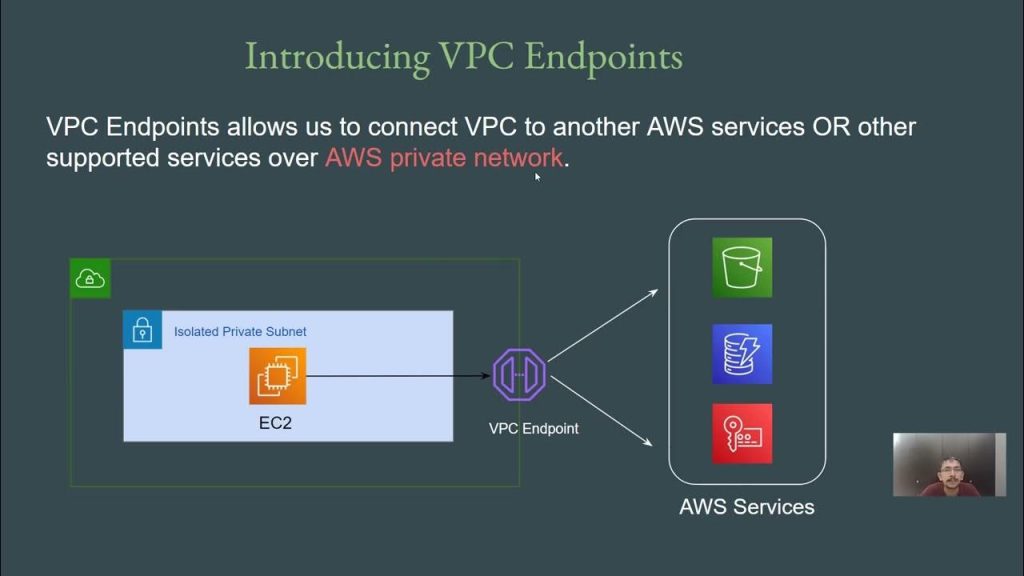

AWS Virtual Private Cloud (VPC) endpoints give organizations secure, private connectivity between their VPCs and AWS services, as well as services hosted by other AWS customers and partners. These virtual devices let instances in an Amazon VPC communicate with a service's resources without public IP addresses. Traffic between the VPC and the service does not leave the Amazon network, which reduces the exposure of cloud deployments to the public internet.

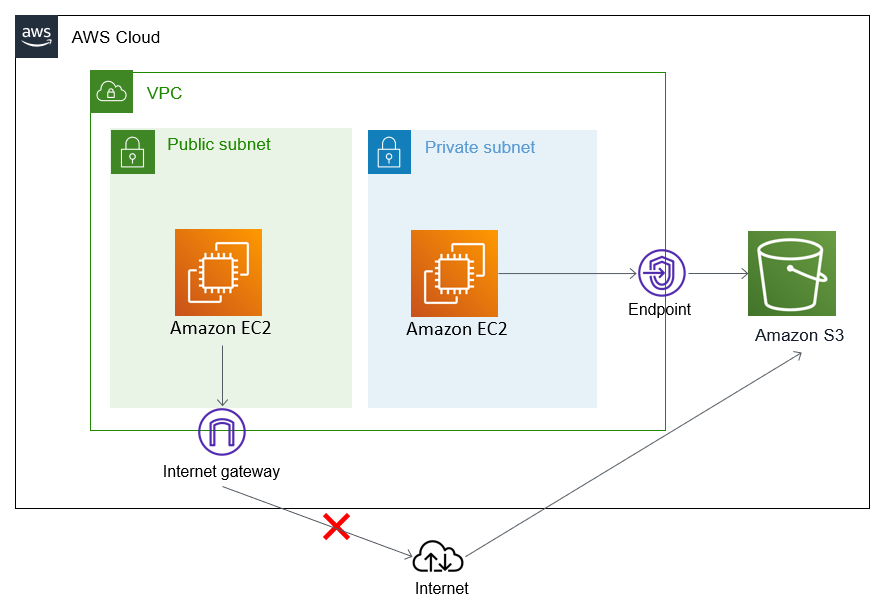

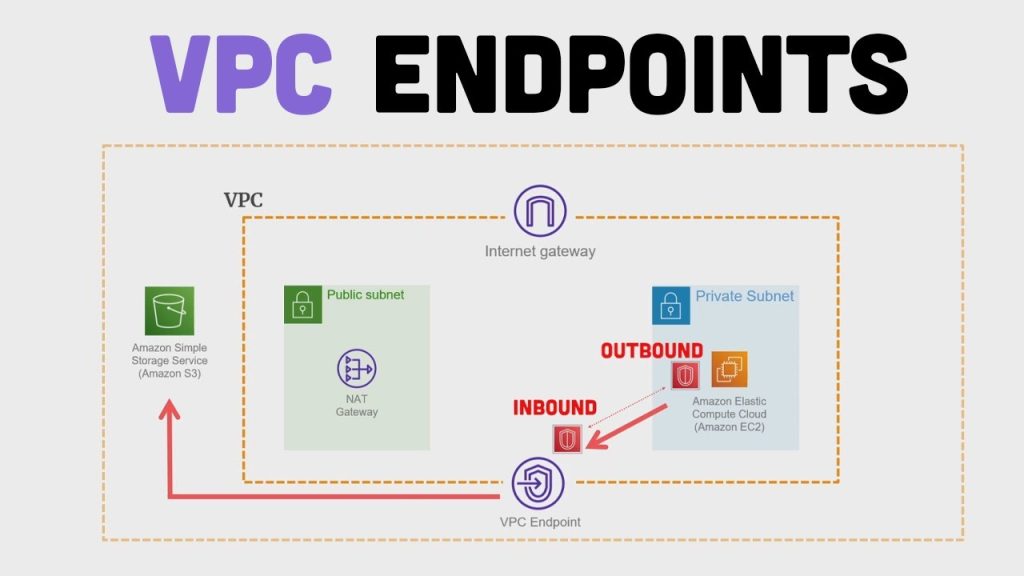

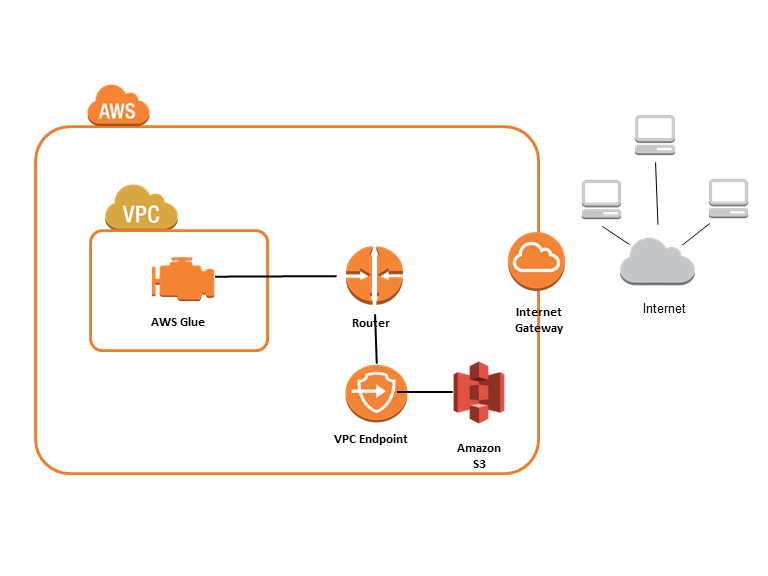

VPC endpoints address a fundamental challenge in cloud networking: private communication between resources within a VPC, particularly those in private subnets, and essential AWS services. Instances in private subnets have no direct route to the public internet by design, which complicates their interaction with resources outside the VPC, including AWS services like Amazon Simple Storage Service (S3). Network Address Translation (NAT) gateways can give private subnets outbound access, but that traffic still leaves the VPC through an internet gateway and reaches the service at its public endpoint. VPC endpoints offer a more direct path that keeps this communication within the AWS network without an internet gateway or NAT device.

VPC endpoints matter for secure cloud networking because traffic to AWS services stays within the AWS network, which reduces exposure to threats on the public internet. They also often simplify network architecture by removing the need for complex routing configurations, NAT gateways, and extensive IP allowlisting, which makes the environment easier to manage and secure. This is particularly important for enterprises that handle sensitive data and must meet stringent regulatory compliance standards.

Understanding the Fundamentals of VPC Endpoints

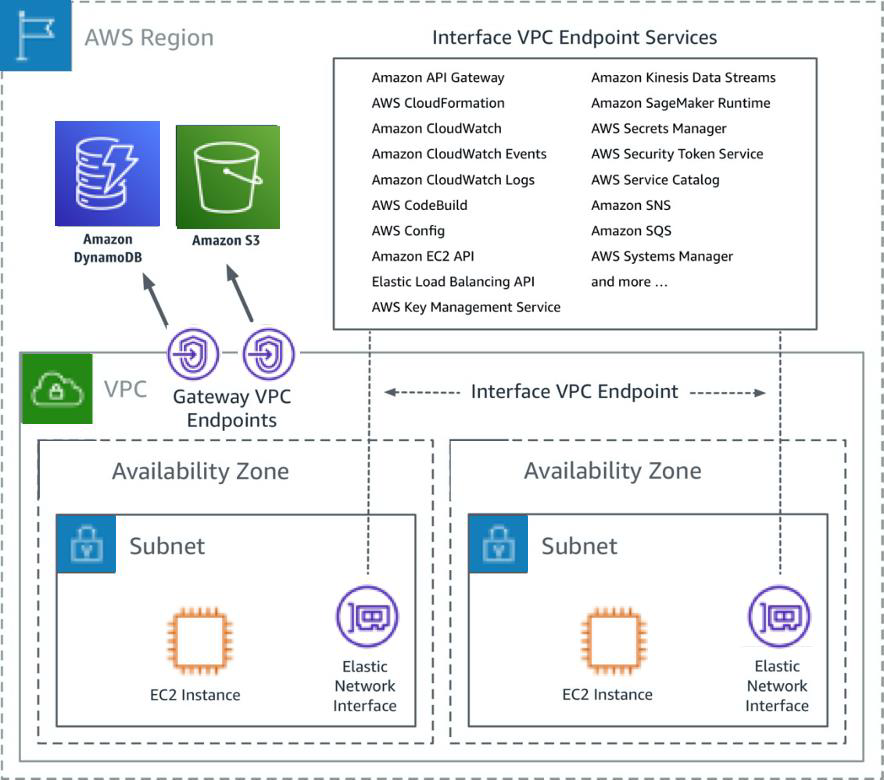

At the core of AWS VPC endpoints lies private connectivity: resources in a VPC reach AWS services using their private IP addresses, so the communication path stays within the private network space and data flow is easier to control. VPC endpoints also remove the need for an internet gateway to communicate with supported services, which simplifies the network topology and eliminates a potential point of exposure to the public internet. The endpoints themselves are virtual devices that AWS builds to be horizontally scaled, redundant, and highly available, and AWS manages that scaling and availability without user intervention. In the context of AWS PrivateLink, it is essential to distinguish the service consumer, which creates the endpoint to access a service, from the service provider, which hosts and exposes the service. This distinction explains how both AWS services and third-party offerings can be accessed privately.

VPC endpoints offer several benefits. The first is security: traffic remains within the AWS network, which reduces the risk of interception or compromise on the public internet. Because traffic avoids the public internet path and any NAT devices, endpoints can also reduce latency and improve throughput when accessing AWS services, which is particularly useful for applications that move large datasets, although the gain depends on the workload. Avoiding complex routing rules and NAT gateways makes connectivity to AWS services easier to set up and manage. Interface endpoints have associated costs, but VPC endpoints, especially gateway endpoints for S3 and DynamoDB, can lower overall costs by removing the NAT gateway data processing charges that this traffic would otherwise incur.

VPC endpoints also have limitations. Not all AWS services support them: gateway endpoints are available only for S3 and DynamoDB, and interface endpoints, although they support a much broader range, do not cover every AWS service. Gateway endpoints for S3 and DynamoDB do not use AWS PrivateLink; they rely on a route-based mechanism, adding routes to a VPC route table whose destination is a prefix list for S3 or DynamoDB. As a result, they lack PrivateLink features such as access from on-premises networks. Finally, interface endpoints incur hourly charges per Availability Zone plus data processing fees, which need to be included in any cost analysis [1].

Types of VPC Endpoints: Interface vs. Gateway

AWS offers two main types of VPC endpoints to cater to different connectivity needs: interface endpoints and gateway endpoints.

Interface endpoints are powered by AWS PrivateLink. Each one places elastic network interfaces (ENIs) with private IP addresses in the subnets you select, and those interfaces serve as the entry point for traffic to the service.

Gateway endpoints, in contrast, operate at the routing layer. They add routes to your VPC route table that use a prefix list as the destination, directing traffic intended for Amazon S3 or Amazon DynamoDB to the endpoint. This is simpler to configure for these services because it does not create ENIs. Gateway endpoints support only Amazon S3 and Amazon DynamoDB. Their primary use cases are securely accessing S3 buckets for object storage and DynamoDB tables for NoSQL database operations from instances in private subnets, without internet access.
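To make the route-based mechanism concrete, here is a minimal sketch of how a route table with a prefix-list route might select a next hop. It is a simplified model, not AWS's implementation: the CIDR standing in for the S3 prefix list and the `vpce-`/`pl-` identifiers are illustrative placeholders, and `next_hop` is a hypothetical helper.

```python
import ipaddress

# Hypothetical route table for a private subnet. The S3 CIDR and the
# endpoint/prefix-list IDs are illustrative placeholders, not real values.
ROUTES = [
    ("10.0.0.0/16", "local"),
    ("52.216.0.0/15", "vpce-0example (via pl-0example)"),
]


def next_hop(destination_ip, routes=ROUTES):
    """Return the target of the most specific route matching destination_ip."""
    address = ipaddress.ip_address(destination_ip)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in routes
        if address in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None  # no matching route: this subnet has no path out
    # The longest (most specific) prefix wins.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


print(next_hop("52.216.10.20"))   # address in the placeholder S3 range
print(next_hop("10.0.3.7"))       # address inside the VPC
print(next_hop("93.184.216.34"))  # any other address has no route here
```

In this model, only destinations covered by the prefix list are sent to the endpoint; the subnet still has no general route to the internet.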

Practical Use Cases of AWS VPC Endpoints

The versatility of AWS VPC endpoints makes them applicable across numerous scenarios and industries. Here are ten practical use cases:

- Securely Accessing S3 from Private Subnets: Organizations can enable instances in their private subnets to securely access S3 buckets for storing backups, logs, or application data without requiring public IP addresses or routing traffic through an internet gateway [2]. This ensures that sensitive data transferred to and from S3 remains within the AWS network.

- Securely Accessing DynamoDB from Private Subnets: Applications running on instances within private subnets can privately communicate with DynamoDB tables for read and write operations, so database traffic does not traverse the public internet and sensitive application data is better protected [4].

- Private Connectivity to SaaS Applications via AWS PrivateLink: Businesses can establish secure and private connections to Software-as-a-Service (SaaS) applications hosted on AWS that are exposed through AWS PrivateLink [10]. This allows integration with third-party services without the security risks associated with public internet exposure.

- Centralized Shared Services VPC: Large organizations with multiple VPCs can create a centralized VPC hosting shared services such as logging, monitoring, security tools, and identity management, and then provide private access to these services from other VPCs using interface endpoints powered by AWS PrivateLink [10]. This simplifies management and enhances security across the entire AWS infrastructure.

- Hybrid Connectivity to On-Premises Services: VPC endpoints, in conjunction with AWS Direct Connect or a VPN connection, can facilitate secure and private communication between applications running in AWS and services hosted in on-premises data centers [10]. This is crucial for organizations adopting a hybrid cloud strategy.

- Presenting Microservices via AWS PrivateLink: In a microservices architecture where different services are deployed in separate VPCs, AWS PrivateLink can enable private and secure communication between these services, improving the security and isolation of the application [10].

- Inter-Region Access to Endpoint Services: For organizations with a global footprint, VPC endpoints, along with inter-Region VPC peering, can enable private access to services hosted in a different AWS Region, keeping communication across geographical boundaries on the AWS network [10].

- Securely Accessing AWS Management Services: While primarily focused on data plane access, interface endpoints can also secure access to certain AWS management services like CloudWatch Logs and AWS CloudTrail, ensuring that administrative traffic remains within the AWS network [22].

- Accessing AWS Step Functions Workflows: Applications within a VPC can privately interact with AWS Step Functions workflows using interface endpoints, allowing for secure orchestration of distributed applications without exposing traffic to the public internet [20].

- Connecting to Amazon Managed Blockchain: Organizations using Amazon Managed Blockchain can create interface VPC endpoints to establish secure and private connections to their blockchain network nodes, ensuring that sensitive blockchain-related traffic remains within the AWS private network [23].

AWS Services Powering VPC Endpoints

Creating and managing AWS VPC endpoints involves several AWS services working together. The foundation is Amazon Virtual Private Cloud (VPC) itself, which provides the isolated virtual network where endpoints are provisioned and managed. AWS PrivateLink is the underlying technology for interface VPC endpoints, enabling private connectivity between VPCs and many services without exposing traffic to the public internet. AWS Identity and Access Management (IAM) controls who can create and manage endpoints, and its policy language is used for endpoint policies, which govern which principals can access which resources through an endpoint [6]. Optionally, Amazon Route 53 comes into play when private DNS is enabled for interface endpoints: the service's public DNS name then resolves to the private IP addresses of the endpoint network interfaces within your VPC [6].
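As an illustration of an endpoint policy, the sketch below builds a policy document that allows only object reads and writes on a single S3 bucket. The helper name `s3_endpoint_policy` and the bucket name are assumptions made for this example; a real policy would be tailored to the actions and resources your workloads need.

```python
import json


def s3_endpoint_policy(bucket_name, actions=("s3:GetObject", "s3:PutObject")):
    """Build an endpoint policy limiting access to one bucket's objects."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": "*",
                "Action": list(actions),
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


# "example-backup-bucket" is a placeholder bucket name.
policy = s3_endpoint_policy("example-backup-bucket")
print(json.dumps(policy, indent=2))
```

An endpoint policy does not grant permissions on its own; it only narrows what can pass through the endpoint, so the caller's IAM permissions and any bucket policy still apply.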

For interface VPC endpoints, security groups are associated with the endpoint's elastic network interfaces and act as virtual firewalls, controlling traffic at the network interface level. Network Access Control Lists (NACLs) can optionally be configured at the subnet level as a stateless firewall, controlling traffic flow for both interface and gateway endpoints. Finally, for service providers that offer private access to their services via AWS PrivateLink, the Network Load Balancer (NLB) is a key component in creating scalable and highly available endpoint services.

Step-by-Step Guide to Creating VPC Endpoints

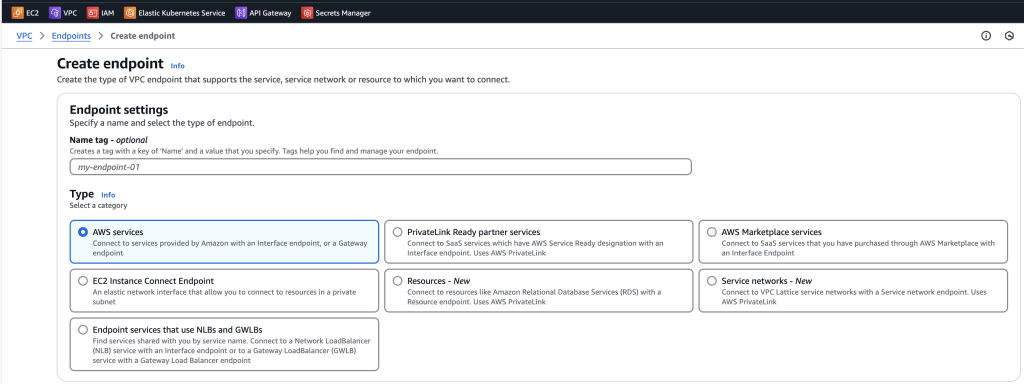

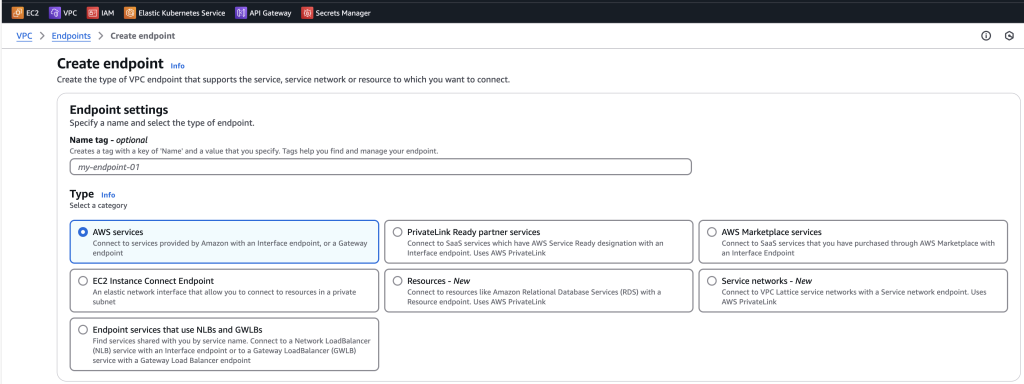

Creating AWS VPC endpoints is a straightforward process within the AWS Management Console.

Creating an Interface VPC Endpoint:

- Navigate to the AWS VPC console and select Endpoints from the navigation pane [16].

- Click on the Create endpoint button [16].

- For Type, choose AWS services [16].

- In the Service name dropdown, select the AWS service you wish to connect to [16].

- Select your target VPC from the dropdown list [16].

- Choose the specific Subnets within your VPC where you want to create the endpoint network interfaces. Selecting one subnet per Availability Zone is recommended for high availability [16].

- Select the appropriate IP address type: IPv4, IPv6, or Dualstack, based on your subnet configurations and the service’s support [16].

- Choose the Security groups to associate with the endpoint network interfaces. Ensure these security groups allow inbound traffic from your VPC resources on the ports the service uses, typically HTTPS on port 443 [16].

- Under Policy, you can optionally configure an endpoint policy. Choose Full access to allow all operations or Custom to specify a more restrictive policy [16].

- You can optionally add Tags to your endpoint for better organization and management [16].

- Finally, click the Create endpoint button to provision your interface VPC endpoint [16].
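The same steps can also be scripted. The sketch below assumes an `ec2` object that behaves like `boto3.client("ec2")` and uses its `create_vpc_endpoint` call; the function name `create_interface_endpoint` and all IDs in the usage comment are hypothetical. The client is passed in so the sketch makes no assumptions about credentials or Region.

```python
def create_interface_endpoint(ec2, vpc_id, service_name, subnet_ids,
                              security_group_ids, name):
    """Create an interface endpoint and return its ID.

    `ec2` is expected to behave like boto3.client("ec2").
    """
    response = ec2.create_vpc_endpoint(
        VpcEndpointType="Interface",
        VpcId=vpc_id,
        ServiceName=service_name,            # e.g. "com.amazonaws.us-east-1.sqs"
        SubnetIds=list(subnet_ids),          # ideally one subnet per AZ
        SecurityGroupIds=list(security_group_ids),
        PrivateDnsEnabled=True,              # resolve the service name privately
        TagSpecifications=[
            {
                "ResourceType": "vpc-endpoint",
                "Tags": [{"Key": "Name", "Value": name}],
            }
        ],
    )
    return response["VpcEndpoint"]["VpcEndpointId"]


# Example call (placeholder IDs, requires boto3 and AWS credentials):
# import boto3
# endpoint_id = create_interface_endpoint(
#     boto3.client("ec2", region_name="us-east-1"),
#     vpc_id="vpc-0example",
#     service_name="com.amazonaws.us-east-1.sqs",
#     subnet_ids=["subnet-0aaa", "subnet-0bbb"],
#     security_group_ids=["sg-0example"],
#     name="sqs-interface-endpoint",
# )
```

Omitting a policy document here corresponds to the console's Full access option.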

Creating a Gateway VPC Endpoint (for S3 or DynamoDB):

- Navigate to the AWS VPC console and select Endpoints from the navigation pane [2].

- Click on the Create endpoint button [2].

- For Service category, select AWS services [2].

- In the Service section, search for either Amazon S3 or Amazon DynamoDB and select the entry whose Type is Gateway; the same service may also be listed with an Interface type [2].

- Choose your target VPC from the dropdown list [2].

- In the Route tables section, select the route tables associated with the subnets that need access to the gateway endpoint. A route is automatically added to these tables to direct traffic to the endpoint [8].

- Under Policy, you can optionally configure an endpoint policy. Choose Full access, or Custom to restrict access to specific S3 buckets or DynamoDB tables [2].

- You can optionally add Tags to your endpoint [2].

- Click the Create endpoint button to provision your gateway VPC endpoint [2].
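A scripted equivalent of these steps might look like the following sketch. It again assumes an `ec2` object that behaves like `boto3.client("ec2")`; the function name `create_s3_gateway_endpoint` is hypothetical, and the optional `policy` argument is any endpoint policy expressed as a Python dictionary.

```python
import json


def create_s3_gateway_endpoint(ec2, vpc_id, region, route_table_ids, policy=None):
    """Create an S3 gateway endpoint and return its ID.

    `ec2` is expected to behave like boto3.client("ec2"). Routes to the
    endpoint are added to every route table listed in `route_table_ids`.
    """
    params = {
        "VpcEndpointType": "Gateway",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.s3",
        "RouteTableIds": list(route_table_ids),
    }
    if policy is not None:
        # A custom policy replaces the default full-access policy.
        params["PolicyDocument"] = json.dumps(policy)
    response = ec2.create_vpc_endpoint(**params)
    return response["VpcEndpoint"]["VpcEndpointId"]
```

Passing the route tables at creation time mirrors the Route tables step in the console; leaving `policy` unset corresponds to Full access.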

Securing Your Endpoints: Configuring Security Groups and NACLs

Securing VPC endpoints involves properly configuring both security groups and Network Access Control Lists (NACLs). For interface endpoints, you must associate security groups with the endpoint network interfaces [6]. These security groups need inbound rules that allow traffic from your VPC resources on the ports the service uses, such as HTTPS on port 443 for many AWS services [7]. Because security groups are stateful, the endpoint’s security group generally does not need outbound rules for the responses [7]. It is also crucial that the security groups attached to your VPC resources (like EC2 instances or Lambda functions) allow outbound traffic to the private IP addresses of the interface endpoint.

NACLs provide an additional layer of security at the subnet level for both interface and gateway endpoints. NACLs are stateless, so you need to configure both inbound and outbound rules [7]. First, identify the NACLs associated with the subnets where your VPC endpoints are created [7]. Then configure inbound rules on those NACLs to permit traffic from your VPC resources to the endpoint on the required ports [7]. Finally, configure outbound rules to allow the return traffic from the endpoint back to your VPC resources. Return traffic typically uses ephemeral ports (1024-65535), so those ports usually need to be allowed [7].
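The effect of stateless filtering can be shown with a small model. This is a simplified sketch, not AWS's rule engine: it matches on port only (ignoring protocol and CIDR), and the `Rule` class, `evaluate` function, and rule numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    number: int
    port_from: int
    port_to: int
    allow: bool


def evaluate(rules, port):
    """Return True if the lowest-numbered matching rule allows the port.

    Rules are checked in ascending rule-number order and the first match
    decides; if nothing matches, the implicit final rule denies.
    """
    for rule in sorted(rules, key=lambda r: r.number):
        if rule.port_from <= port <= rule.port_to:
            return rule.allow
    return False


# NACL on the endpoint's subnet: HTTPS is allowed in.
inbound = [Rule(100, 443, 443, True)]
outbound_without_return = []                          # no return rule
outbound_with_return = [Rule(100, 1024, 65535, True)]  # ephemeral ports

client_port = 50000  # ephemeral source port chosen by the client
print(evaluate(inbound, 443))                          # True
print(evaluate(outbound_without_return, client_port))  # False
print(evaluate(outbound_with_return, client_port))     # True
```

The request gets in either way, but without the outbound ephemeral-port rule the response is dropped, which is the failure mode that stateful security groups avoid.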

Navigating the Costs: Understanding VPC Endpoint Pricing Models

Understanding the pricing models for different types of VPC endpoints is essential for cost management. Interface Endpoints incur an hourly charge for each endpoint provisioned in each Availability Zone [1]. Additionally, there are data processing charges based on the volume of data that flows through the endpoint, with tiered pricing that can reduce the cost per GB as usage increases [1]. For interface endpoints used for cross-region connectivity, standard PrivateLink charges for data processing and hours apply, along with AWS cross-region data transfer rates [3].

| Data processed per month in an AWS Region | Price per GB of data processed (USD) | Price per VPC endpoint per AZ (USD/hour), example Region: US East (Ohio) |
| --- | --- | --- |
| First 1 PB | 0.01 | 0.01 |
| Next 4 PB | 0.006 | 0.01 |
| Anything over 5 PB | 0.004 | 0.01 |
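As a worked example of these rates, the following sketch estimates the monthly cost of one interface endpoint. It makes several assumptions that are not in the pricing table: a 730-hour month, decimal petabytes (1 PB = 1,000,000 GB), and a single endpoint accounting for all data processed in the Region. The function name is hypothetical.

```python
GB_PER_PB = 1_000_000  # assumption: decimal petabytes

# (tier size in GB, USD per GB); None means "everything above".
DATA_TIERS = [
    (1 * GB_PER_PB, 0.01),
    (4 * GB_PER_PB, 0.006),
    (None, 0.004),
]
HOURLY_RATE_PER_AZ = 0.01  # USD per endpoint per AZ per hour


def monthly_interface_endpoint_cost(data_gb, az_count, hours=730):
    """Estimate one endpoint's monthly cost: hourly charge plus tiered data."""
    hourly = HOURLY_RATE_PER_AZ * az_count * hours
    remaining = data_gb
    processing = 0.0
    for size, rate in DATA_TIERS:
        if remaining <= 0:
            break
        billed = remaining if size is None else min(remaining, size)
        processing += billed * rate
        remaining -= billed
    return round(hourly + processing, 2)


# 500 GB through an endpoint deployed in 2 AZs:
# hourly 0.01 * 2 * 730 = 14.60, data 500 * 0.01 = 5.00
print(monthly_interface_endpoint_cost(data_gb=500, az_count=2))  # 19.6
```

At low volumes the hourly charge dominates, which is why the number of Availability Zones and the number of endpoints matter more than the per-GB rate for many workloads.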

In contrast, Gateway Endpoints for Amazon S3 and Amazon DynamoDB do not incur additional charges for the endpoint itself. Users are billed only for the standard data transfer and resource usage costs for S3 and DynamoDB. Gateway Load Balancer Endpoints, used for integrating network and security appliances, follow a pricing model similar to interface endpoints, with an hourly charge per endpoint per Availability Zone and a per GB data processing charge [3]. Resource Endpoints, designed for accessing shared resources in other VPCs, also have an hourly charge per endpoint, along with tiered data processing charges based on monthly usage [3].

For cost optimization, organizations should consider centralizing VPC endpoints in a shared services VPC, especially in multi-account environments, to reduce the overall number of endpoints [1]. Using gateway endpoints for S3 and DynamoDB whenever possible is also a cost-effective strategy, as they do not incur direct endpoint charges [2]. Carefully evaluating the number of Availability Zones needed for interface endpoints can help balance cost and availability requirements [2]. Monitoring data processing through interface endpoints can identify potential areas for optimization [1]. Finally, comparing the costs of interface endpoints with alternative solutions like NAT Gateways, based on specific traffic patterns and volumes, is crucial for making informed decisions [2].

Best Practices for Implementation and Management

Effective implementation and management of VPC endpoints require adherence to several best practices. It is crucial to choose the right VPC endpoint type based on the specific AWS service being accessed and the requirements for security and features [3]. For most services needing private connectivity, interface endpoints are suitable, while gateway endpoints are best for S3 and DynamoDB when PrivateLink features are not necessary. Gateway Load Balancer Endpoints should be considered for network security and inspection purposes [3].

Applying the principle of least privilege is essential. Endpoint policies, security groups, and NACLs should be configured to allow only the necessary traffic and actions, minimizing the potential impact of any security breach [6]. Continuous monitoring and logging of endpoint activity using Amazon CloudWatch and AWS CloudTrail are essential for troubleshooting performance issues and auditing for security purposes [1]. For interface endpoints, deploying them across multiple Availability Zones ensures high availability and redundancy [1]. Implementing proper naming and tagging conventions for VPC endpoints improves organization and simplifies management [1]. Regularly reviewing and updating the configurations of VPC endpoints, security groups, NACLs, and policies is necessary to ensure they continue to meet evolving application needs and security standards [3]. In large or multi-account environments, adopting a centralized VPC endpoint architecture can lead to significant cost optimization and simplified management [1]. Finally, enabling VPC Flow Logs for the subnets where endpoints are located provides valuable insights into network traffic patterns, aiding in troubleshooting and security analysis.

Extending Boundaries: Cross-Account Access with VPC Endpoints

VPC endpoints can also support secure cross-account access to AWS services. Access to interface endpoints can be extended to other AWS accounts, for example by sharing the subnets of the endpoint’s VPC through AWS Resource Access Manager (RAM) [9]. Resources in the other account can then reach services privately through the endpoint without public internet access. Gateway endpoints for S3 and DynamoDB, however, cannot be shared directly across accounts [5]; the gateway endpoint needs to be created in the VPC of the account whose resources access the service. Cross-account access to the S3 buckets or DynamoDB tables themselves is then managed through IAM policies [5].

Cross-account IAM roles are fundamental for granting permissions to resources in one AWS account to access services in another account, even when using VPC endpoints [4]. The resource in the accessing account assumes a role in the target account, gaining temporary credentials to interact with the service. If resources in a private subnet need to access services in another account via a VPC endpoint and also need to assume a cross-account IAM role, an interface VPC endpoint for AWS Security Token Service (STS) might be required in the consumer account’s VPC [5]. This ensures that the STS call to assume the role also happens privately within the AWS network, especially if the private subnet lacks direct internet access.
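The role assumption step might be sketched as follows. The `sts` argument is assumed to behave like `boto3.client("sts")` configured for the Regional STS endpoint; the function name, role ARN, and session name are placeholders. Whether the call stays private depends on an STS interface endpoint with private DNS being present in the VPC, which this code cannot verify.

```python
def assume_cross_account_role(sts, role_arn, session_name="vpce-access"):
    """Assume a role in another account and return temporary credentials.

    `sts` is expected to behave like boto3.client("sts"). The returned
    dictionary uses the keyword names that boto3 clients accept for
    explicit credentials.
    """
    response = sts.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


# Example call (placeholder account ID and role name, requires boto3):
# import boto3
# sts = boto3.client(
#     "sts",
#     region_name="us-east-1",
#     endpoint_url="https://sts.us-east-1.amazonaws.com",
# )
# creds = assume_cross_account_role(
#     sts, "arn:aws:iam::111122223333:role/ExampleCrossAccountRole")
# s3 = boto3.client("s3", **creds)
```

The temporary credentials expire, so long-running workloads need to repeat the call before the session ends.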

Conclusion

AWS VPC endpoints are a cornerstone of secure and efficient cloud networking, providing a private and reliable means for accessing a wide range of AWS services without exposing traffic to the public internet. By understanding the nuances between interface and gateway endpoints, their benefits and limitations, and the associated pricing models, organizations can strategically leverage this feature to enhance their security posture, improve application performance, and simplify their network architecture. Adhering to best practices for implementation, management, and cross-account access further maximizes the value derived from VPC endpoints, making them an indispensable component of a well-architected AWS infrastructure.
